Garbage In, Garbage Out

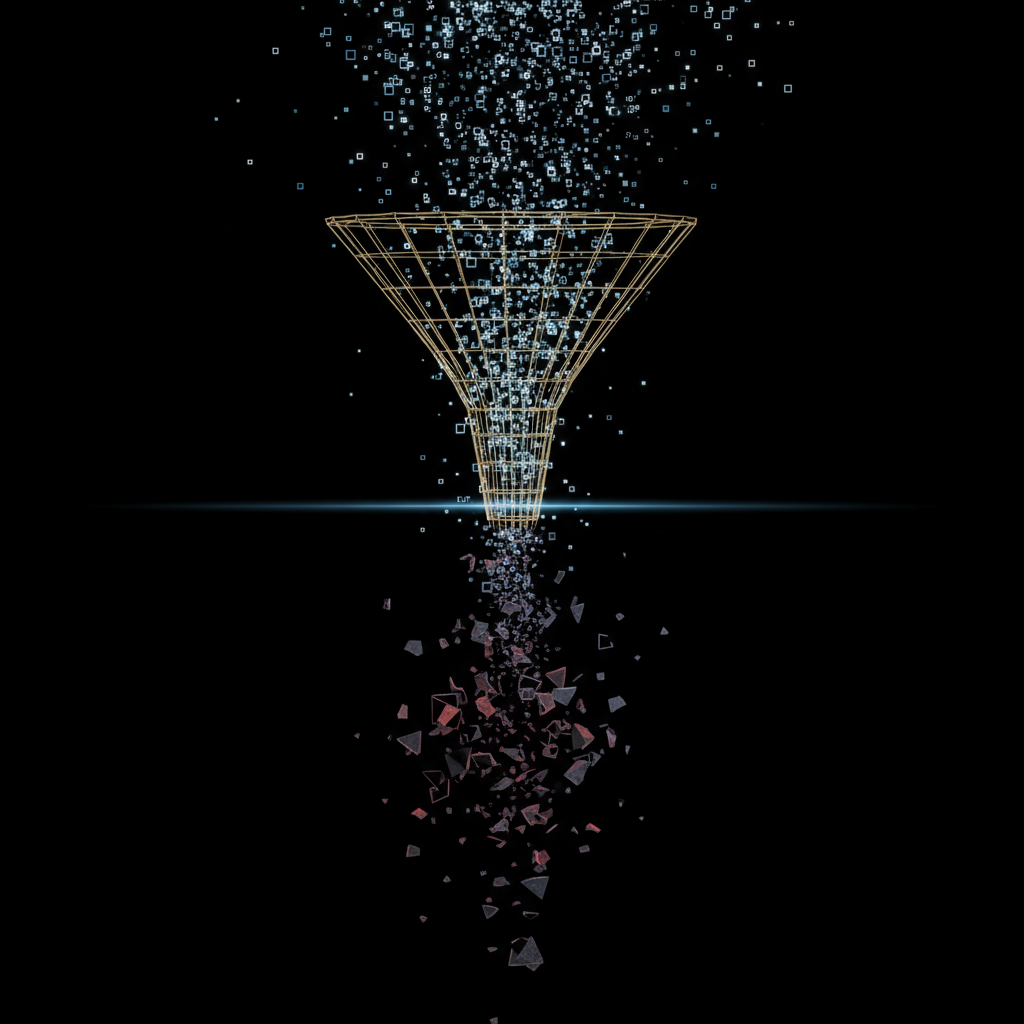

There's an old saying in computing that predates AI by decades: garbage in, garbage out. It was true when it referred to spreadsheets. It was true when it referred to databases. And it is profoundly, dangerously true when it refers to agentic systems — except now the garbage comes out wearing a suit and speaking in complete sentences.

That's the part nobody talks about enough.

The Problem Is Worse Than You Think

With a traditional data pipeline, bad input usually produces obviously bad output. A malformed CSV breaks a parser. A null value throws an exception. A wrong date format produces a visible error. The system screams at you. You fix it. Life goes on.

Agents don't scream. They compensate.

Feed an LLM-powered agent incomplete data, and it won't tell you "hey, I'm missing 30% of the fields I need to do this properly." It will fill the gaps. It will infer. It will generate plausible-sounding content to bridge the holes in its input — and it will do it so smoothly that the output reads better than the input. More complete. More polished. More confident.

That's not analysis. That's fabrication with good grammar.

We ran into this on one of our early projects. A report generation pipeline was producing beautifully written summaries. The client loved them. Then someone cross-referenced a batch of reports against the source data and found that roughly 15% of the "findings" in those reports had no basis in the input. The model had encountered gaps and filled them with what it thought should be there based on pattern matching against its training data.

The outputs weren't random nonsense. They were plausible nonsense. Industry-appropriate terminology, reasonable-sounding conclusions, properly formatted citations to sections that existed in the source docs but didn't actually support the claims being made. If you weren't intimately familiar with the source material, you'd never catch it.

That's when we got serious about data quality.

Where Bad Data Comes From

In our experience, the data quality problems that hurt agentic systems usually aren't the dramatic ones, like corrupted files or completely wrong data. They're the subtle ones:

Incomplete records. A dataset that's 95% populated looks fine at a glance. But if the missing 5% happens to be the fields your agent needs to make a critical determination, you get inference where you should get fact. And the agent won't flag it because from its perspective, it's doing its job — generating output from available input.

Stale data mixed with fresh data. We see this constantly. An archive contains records from three different time periods, but nothing in the data indicates which is current. The agent treats a policy from 2019 the same as one from yesterday. The output looks authoritative but is based on outdated information.

Duplicate records with subtle differences. Two versions of the same entity, slightly different. Maybe one was updated and the other wasn't. Maybe they came from different source systems. The agent processes both and now you have conflicting findings that both look correct.

Format inconsistency. Same field, different formats across records. Dates as MM/DD/YYYY in one batch and DD-MM-YYYY in another. A value like 03-04-2024 parses cleanly either way, as March 4 or as April 3. The agent doesn't fail on this. It interprets each one according to whatever pattern it detects, and silently gets some of them wrong.

Context loss. Data extracted from its original context. A number without its unit. A status code without its legend. A reference ID that pointed to something in the source system but means nothing in isolation. The agent will still use it — it just won't use it correctly.
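The format inconsistency case is one of the few on this list that can be caught mechanically. Here is a minimal sketch, assuming a hypothetical list of candidate formats: a date string is flagged when it parses to more than one distinct calendar date, instead of being left to whichever pattern gets guessed. The names `CANDIDATE_FORMATS` and `classify_date` are illustrative, not part of any system described here.

```python
from datetime import date, datetime

# Hypothetical candidate formats; a real pipeline would list whatever its sources emit.
CANDIDATE_FORMATS = ["%m/%d/%Y", "%d/%m/%Y", "%m-%d-%Y", "%d-%m-%Y"]


def parse_interpretations(raw: str) -> set[date]:
    """Return every distinct calendar date the string could represent."""
    results = set()
    for fmt in CANDIDATE_FORMATS:
        try:
            results.add(datetime.strptime(raw, fmt).date())
        except ValueError:
            continue  # this format does not fit; try the next one
    return results


def classify_date(raw: str) -> str:
    """Label a raw date string as 'ok', 'ambiguous', or 'unparseable'."""
    dates = parse_interpretations(raw)
    if not dates:
        return "unparseable"
    if len(dates) > 1:
        return "ambiguous"  # e.g. 03/04/2024 is March 4 or April 3
    return "ok"
```

A value such as 13/04/2024 comes back as "ok" because only the day-first reading is valid, while 03/04/2024 comes back as "ambiguous" and can be routed to a human or resolved from batch-level metadata.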

Why Agents Make This Worse, Not Better

Here's what makes agentic systems uniquely vulnerable compared to traditional automation:

Compounding errors across pipeline stages. A traditional script processes data in one step. An agent pipeline might chain five, ten, fifteen stages together. A small data quality issue in stage one — a missing field, an ambiguous value — gets interpreted in stage two, built upon in stage three, and by stage five it's become a load-bearing assumption in the final output. The further down the chain, the harder it is to trace back to the original problem.
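To get a feel for how quickly this compounds, here is a back-of-the-envelope sketch. It rests on a simplifying assumption that is ours, not a measurement: each stage independently has the same chance of handling its input correctly. Real pipelines are messier, since later stages build on earlier mistakes rather than failing independently, but the direction holds.

```python
def chance_all_stages_clean(per_stage_accuracy: float, stages: int) -> float:
    """Probability that every stage is correct, assuming independent stages."""
    if not 0.0 <= per_stage_accuracy <= 1.0:
        raise ValueError("per_stage_accuracy must be between 0 and 1")
    if stages < 0:
        raise ValueError("stages must be non-negative")
    return per_stage_accuracy ** stages


if __name__ == "__main__":
    # Illustrative numbers only: a hypothetical 95% per-stage accuracy.
    for n in (5, 10, 15):
        print(n, round(chance_all_stages_clean(0.95, n), 3))
```

Under that assumption, a stage that is right 95% of the time leaves roughly a 77% chance of a clean run after five stages and under 50% after fifteen.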

Confidence without calibration. When a model fills a gap, nothing in its output signals that it is guessing. The text comes out with the same authoritative tone whether it's based on solid data or educated guessing. There's no built-in signal that says "I'm less sure about this part." You have to engineer that signal yourself.

The "looks right" trap. Humans are surprisingly bad at catching AI fabrication when the output is well-structured and uses domain-appropriate language. We trust formatting. We trust fluency. We trust completeness. An agent that produces a polished, comprehensive-looking report from incomplete data is actually harder to quality-check than one that produces a rough report with visible holes, because the gaps have been papered over.

What We Do About It

We've learned to treat data quality as a first-class architectural concern, not a pre-processing step. Some specific things that have made a real difference:

Validate before you process. This is why we built Bouncer. Every file that enters a pipeline passes through focused quality agents before any analysis begins. Format checks, schema validation, completeness scoring, consistency verification against historical data. If a file doesn't meet the quality bar, it gets quarantined — not silently processed with whatever's there.
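The quarantine idea can be sketched in a few lines. This is not how Bouncer is implemented. It is a minimal illustration that assumes a hypothetical set of required fields and a hypothetical threshold, and it covers only the completeness check, not schema or consistency checks.

```python
from dataclasses import dataclass, field

# Hypothetical values for illustration; a real quality bar is domain-specific.
REQUIRED_FIELDS = ["policy_id", "effective_date", "status"]
MIN_COMPLETENESS = 1.0


@dataclass
class GateResult:
    accepted: list = field(default_factory=list)
    quarantined: list = field(default_factory=list)  # (record, score) pairs


def completeness(record: dict) -> float:
    """Fraction of required fields that are present and non-empty."""
    present = sum(1 for name in REQUIRED_FIELDS if record.get(name) not in (None, ""))
    return present / len(REQUIRED_FIELDS)


def gate(records: list) -> GateResult:
    """Split records into accepted and quarantined before any analysis runs."""
    result = GateResult()
    for record in records:
        score = completeness(record)
        if score >= MIN_COMPLETENESS:
            result.accepted.append(record)
        else:
            # Hold the record back with its score; never pass it on silently.
            result.quarantined.append((record, score))
    return result
```

The point of the design is that a record below the bar never reaches the agent at all, so there is nothing for it to fill in.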

Make the agent tell you what it doesn't have. In our verification platform, the agent doesn't just evaluate the document — it also produces a "data availability report" listing which fields were present, which were inferred, and which were absent. The final output includes a confidence breakdown that's tied to data completeness, not just model confidence. When a human reviewer sees "3 of 7 required fields were present for this determination," they know to scrutinize that section.
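One way to build or cross-check such a report outside the model is to compare the source record with what the agent returned. The sketch below makes an assumption: both sides are flat dictionaries keyed by field name. The field names and the `availability_report` function are hypothetical.

```python
# Hypothetical required fields for one kind of determination.
REQUIRED_FIELDS = ["claim_id", "incident_date", "amount"]


def _has_value(record: dict, name: str) -> bool:
    return record.get(name) not in (None, "")


def availability_report(source_record: dict, agent_output: dict) -> dict:
    """Classify each required field as present, inferred, or absent."""
    status = {}
    for name in REQUIRED_FIELDS:
        if _has_value(source_record, name):
            status[name] = "present"
        elif _has_value(agent_output, name):
            # The agent supplied a value the source never contained.
            status[name] = "inferred"
        else:
            status[name] = "absent"
    present = sum(1 for value in status.values() if value == "present")
    return {
        "fields": status,
        "summary": f"{present} of {len(REQUIRED_FIELDS)} required fields were present",
    }
```

Because the classification is computed from the data rather than self-reported, a field the agent quietly filled in still shows up as "inferred" in front of the reviewer.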

Deterministic checks before LLM judgment. We use a dual-evaluation architecture in several of our systems. The first pass is rule-based: does this field exist? Is this value within expected range? Does this record match the expected schema? Only after the data passes deterministic validation does it go to the LLM for judgment calls. This means the model is never asked to reason about data that hasn't been verified as structurally sound.
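The ordering can be sketched as a small gate in front of the model call. The rules, field names, and the `llm_judge` callable below are placeholders for illustration. They stand in for whatever schema and model client a given system uses.

```python
from typing import Callable

# Hypothetical rule set for a single record type.
ALLOWED_CURRENCIES = {"USD", "EUR", "GBP"}


def rule_violations(record: dict) -> list[str]:
    """Deterministic first pass: existence, type, and range checks."""
    problems = []
    amount = record.get("amount")
    if amount is None:
        problems.append("missing field: amount")
    elif isinstance(amount, bool) or not isinstance(amount, (int, float)):
        problems.append("amount is not numeric")
    elif not 0 <= amount <= 1_000_000:
        problems.append("amount out of expected range")
    if record.get("currency") not in ALLOWED_CURRENCIES:
        problems.append("currency missing or unexpected")
    return problems


def evaluate(record: dict, llm_judge: Callable[[dict], str]) -> dict:
    """Only call the model once the record has passed every rule."""
    problems = rule_violations(record)
    if problems:
        return {"status": "rejected", "problems": problems, "judgment": None}
    return {"status": "judged", "problems": [], "judgment": llm_judge(record)}
```

Passing the judge in as a callable keeps the deterministic pass testable on its own, and makes it easy to confirm that a rejected record never triggers a model call.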

Separate "I found this" from "I concluded this." In our pipeline outputs, there's always a clear distinction between extracted data (things the system pulled directly from the source) and derived conclusions (things the system inferred or analyzed). They're formatted differently, labeled differently, and have different confidence treatments. If a reviewer only has five minutes, they know to focus on the derived conclusions.
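As a rough sketch of what that separation can look like in a data model: each finding carries an explicit kind, and the rendering and review order depend on it. The labels and names here are hypothetical, not the format our pipelines emit.

```python
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    EXTRACTED = "extracted"  # pulled directly from the source
    DERIVED = "derived"      # inferred or analyzed by the system


@dataclass(frozen=True)
class Finding:
    text: str
    kind: Kind


def render(finding: Finding) -> str:
    """Label each finding so the two kinds can't be confused on the page."""
    label = "[FOUND]" if finding.kind is Kind.EXTRACTED else "[CONCLUDED]"
    return f"{label} {finding.text}"


def review_order(findings: list[Finding]) -> list[Finding]:
    """Put derived conclusions first, keeping the original order within each kind."""
    return sorted(findings, key=lambda f: f.kind is not Kind.DERIVED)
```

Sorting derived conclusions to the top is one way to serve the reviewer who only has five minutes.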

Track provenance end to end. Every finding in our pipeline outputs links back to the specific source data it came from. Not "this came from document X" but "this came from page 3, paragraph 2 of document X, and here's the extracted text." When something looks suspicious, you can trace it backwards through the entire chain in seconds.
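A provenance record at that granularity might look like the sketch below. The `SourceSpan` shape and the `corpus` lookup are assumptions made for illustration. The check only confirms that the quoted text really appears at the cited location. Whether that text supports the claim is still a question for a reviewer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceSpan:
    document: str
    page: int
    paragraph: int
    quoted_text: str


@dataclass(frozen=True)
class TracedFinding:
    claim: str
    source: SourceSpan


def quote_exists(span: SourceSpan, corpus: dict) -> bool:
    """Check that the quoted text appears at the cited page and paragraph.

    `corpus` is a hypothetical lookup: (document, page, paragraph) -> text.
    """
    paragraph_text = corpus.get((span.document, span.page, span.paragraph))
    return paragraph_text is not None and span.quoted_text in paragraph_text


def unsupported(findings: list[TracedFinding], corpus: dict) -> list[TracedFinding]:
    """Return findings whose citation can't be located in the source."""
    return [f for f in findings if not quote_exists(f.source, corpus)]
```

Running `unsupported` over a batch gives a cheap first filter for citations that point at a real location but quote text that isn't there.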

The Uncomfortable Truth

Data quality isn't a sexy problem. Nobody's writing breathless blog posts about input validation. Nobody's raising Series A rounds for schema enforcement. But after building nine production agentic systems, we can say with confidence: the quality of your agent's output is bounded by the quality of its input, and no amount of prompt engineering, model selection, or orchestration sophistication will change that.

If you're building agentic systems and you're spending 80% of your time on the agent logic and 20% on data quality, flip those numbers. Your agents are probably fine. Your data probably isn't.

The unsexy work is the work that matters.